The Mystery of Consciousness

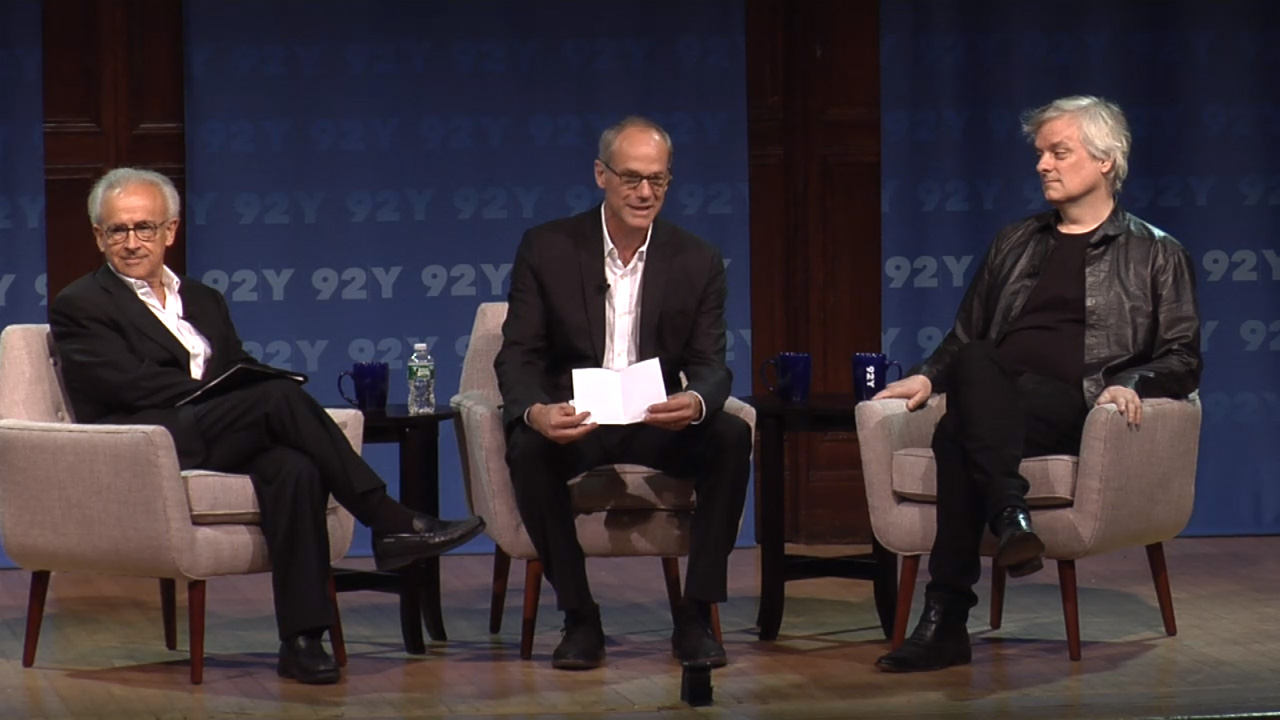

Antonio Damasio & David Chalmers

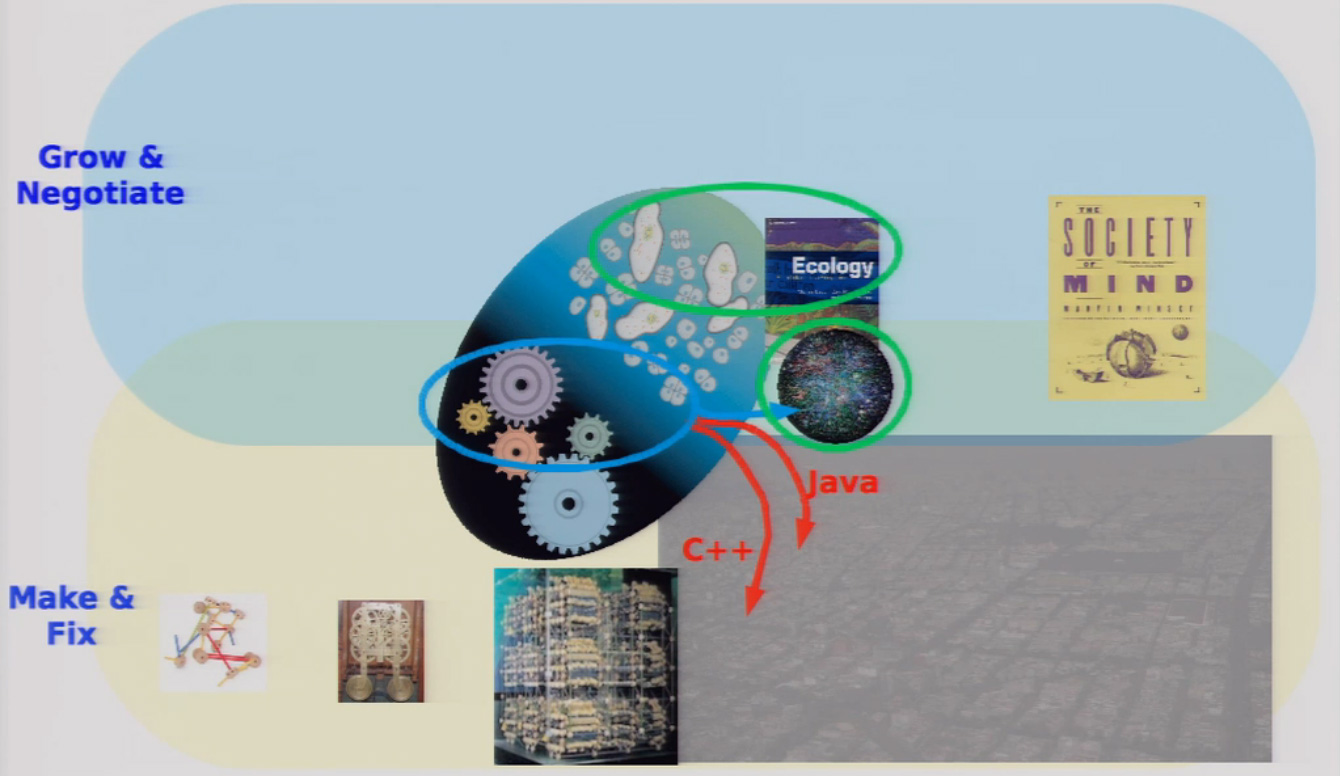

Philosophy

"When one does the job of science, which is to break things apart in order to understand the mechanism, we have to be very careful to put the mechanism back together."

Neuroscientist Antonio Damasio and philosopher David Chalmers engage in a wonderful, nuanced conversation about the nature of consciousness.